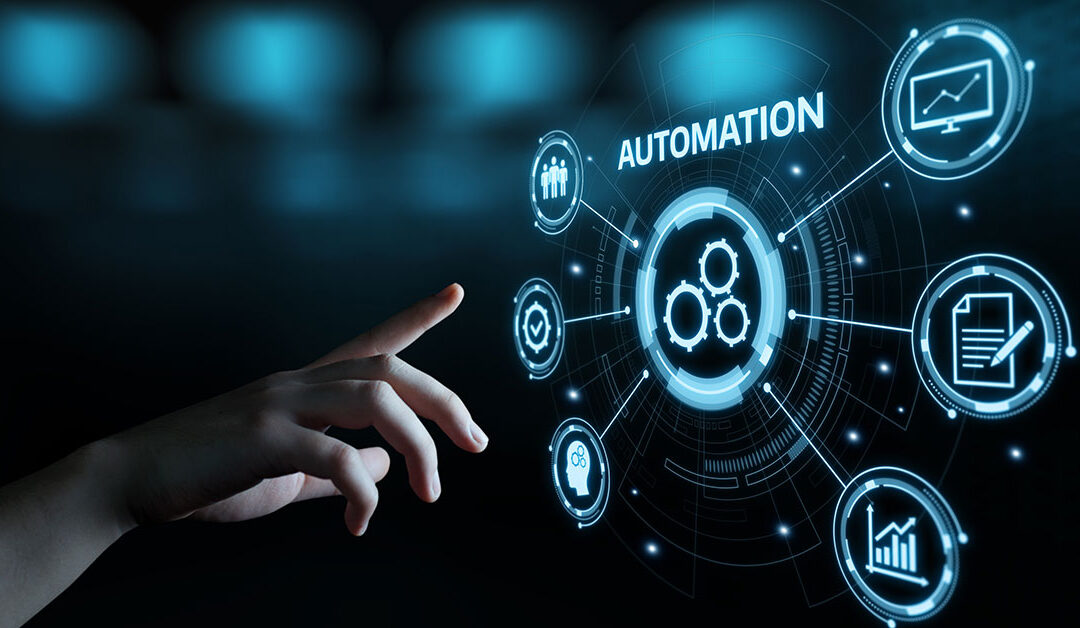

Unlocking Business Growth Through Software Automation

In challenging times, businesses must find ways to maintain operations while...

ZAG Strengthens Business IT Strategies for 2025

For many small and mid-sized businesses, the importance of IT in daily...

Strengthening Cybersecurity in the Fresh Produce Industry

During Cybersecurity Awareness Month, ZAG remains focused on protecting the nation’s food supply from cyberattacks.

Stay Cyber Safe on Your Summer Vacation

Security tips and IT best practices for executive travel, whether on summer vacation or abroad on business.

Safeguarding Ag Against Cyber Threats

The critical need for the Ag sector to acknowledge the cybersecurity challenges that accompany tech advancements.

The Whole Nine Yards, ZAG’s New Service Offering

Securing our food supply chain is important business. An ag cybersecurity assessment is one way to achieve this goal.

How Agriculture is Navigating Cybersecurity Challenges

Ag leaders discuss how the industry is navigating cybersecurity challenges at AgSafe ACTIVATE24.

The Top 5 Reasons You Need a Cybersecurity Assessment

80% of U.S. companies have been successfully hacked. Here’s the solution.

Infographic: Where is the Supply Chain Risk?

The Ag supply chain uses technology from farm to table, which introduces risk. Here’s how.

Analysis: The Farm and Food Cybersecurity Act of 2024

New Cybersecurity Act to protect food supply chain through simulation of food-related emergencies, disruptions.

Marketing Talks IT and Driving Business Performance

Marketing + IT. An unlikely pairing? As drivers of business outcomes, the two are more alike than many think.

The Vital Role Standards Play in Ag Cybersecurity

ZAG discusses cyber standards with Johnny McGuire of ProduceSupply.org, who is also IT Director at Nunes.

Outsource Cybersecurity to an MSP and Protect Your Business

Companies that outsource cybersecurity benefit from lessons learned across hundreds of MSP customers.

Planning for a More Competitive Future

Ag IT leaders, use these tips from ZAG’s CFO to get your IT budget approved.

Could AI Affect Your Ag Job and Career?

AI is forcing a conversation about what Ag industry employers expect from their employees.

Accelerating Climate-Smart Agtech

We speak with Vonnie Estes, IFPA’s VP of Innovation, about nurturing climate-smart companies.

How Agtools Ag Data Shapes Decision Making

Data should be considered in every strategic plan, says Martha Montoya, Agtools CEO.

How Uniting Farmers and AgTech Innovators Builds Success

Dennis Donohue discusses how companies can encourage the implementation of new tech in ag.